TL;DR:

- Technology law affects all businesses using digital tools and data in Europe by covering data protection, AI, cybersecurity, and intellectual property.

- EU regulations like GDPR and the AI Act impose strict obligations with significant penalties, requiring proactive compliance from leadership.

- Effective compliance requires board-level responsibility, risk mapping, legal guidance, and ongoing monitoring, especially for SMEs facing overlapping rules.

Technology law is not a niche concern reserved for Silicon Valley giants or platform monopolies. It governs the daily operations of every business that uses software, processes data, deploys AI tools, or communicates digitally, which in 2026 means virtually every company in Europe. Information technology law covers a far wider terrain than most business leaders realise, spanning data protection, intellectual property, cybersecurity, contracts, and fundamental rights. For technology-driven companies operating in or entering the European market, the regulatory landscape is dense, fast-moving, and consequential. This guide sets out the core frameworks, real compliance challenges, and practical steps that leaders need to understand and act on.

Table of Contents

- The foundations of technology law: What it covers and why it matters

- Core EU technology law frameworks: GDPR, AI Act, and more

- Practical challenges: Overlaps, ambiguities, and SME roadblocks

- Global comparisons and critical perspectives: Is the EU model stifling or safeguarding?

- A realistic take: What leaders actually need to do next

- How expert legal guidance secures your tech business

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Tech law is broad | Technology law covers IT, data, AI, contracts, and privacy, affecting all tech-driven businesses. |
| EU regulations matter | Laws like GDPR and the AI Act set strict compliance duties for companies across Europe. |
| Overlaps increase risk | Multiple EU laws often overlap, making compliance complex but essential for competitiveness. |
| Leadership is key | Leaders must prioritise regulatory readiness rather than leave tech law solely to IT or legal teams. |
| Expert advice helps | Professional guidance streamlines compliance and gives businesses a strategic advantage in tech law. |

The foundations of technology law: What it covers and why it matters

Technology law, sometimes referred to as ICT law or IT law, is the body of legal rules that governs how information technology is created, used, shared, and protected. It is not a single statute. It is an interconnected set of legal domains that together shape how businesses can operate in a digital environment.

According to its established definition, technology law regulates computing, software, artificial intelligence, the internet, data, communications, and cybersecurity, with direct intersections across intellectual property, contract law, privacy law, criminal law, and fundamental rights. That breadth is precisely what makes it strategically significant for leadership teams, not just legal departments.

The key domains where technology law touches business operations include:

- Data protection and privacy: Rules governing how personal data is collected, stored, and processed

- Intellectual property: Software copyright, patent eligibility for algorithms, and trade secret protections

- Contractual obligations: Licensing agreements, SaaS terms, and supplier contracts for technology services

- Cybersecurity law: Mandatory incident reporting, resilience standards, and liability for breaches

- AI regulation: Risk classification, transparency requirements, and prohibited use cases

- Consumer and platform law: Obligations for digital services directed at end users

A common misconception is that technology law only concerns IT departments or large technology firms. In practice, a manufacturing company using a connected supply chain platform, a healthcare provider deploying diagnostic software, or a financial services firm using automated credit scoring all fall squarely within its scope. Understanding the legal risks for tech companies is therefore a board-level responsibility, not an operational afterthought.

"Technology law is not a compliance checkbox. It is the legal architecture within which every technology-driven business decision is made."

Protecting confidential business assets such as source code, proprietary algorithms, and client data sits at the intersection of technology law and commercial strategy. Leaders who treat these as purely technical matters expose their organisations to significant legal and reputational risk.

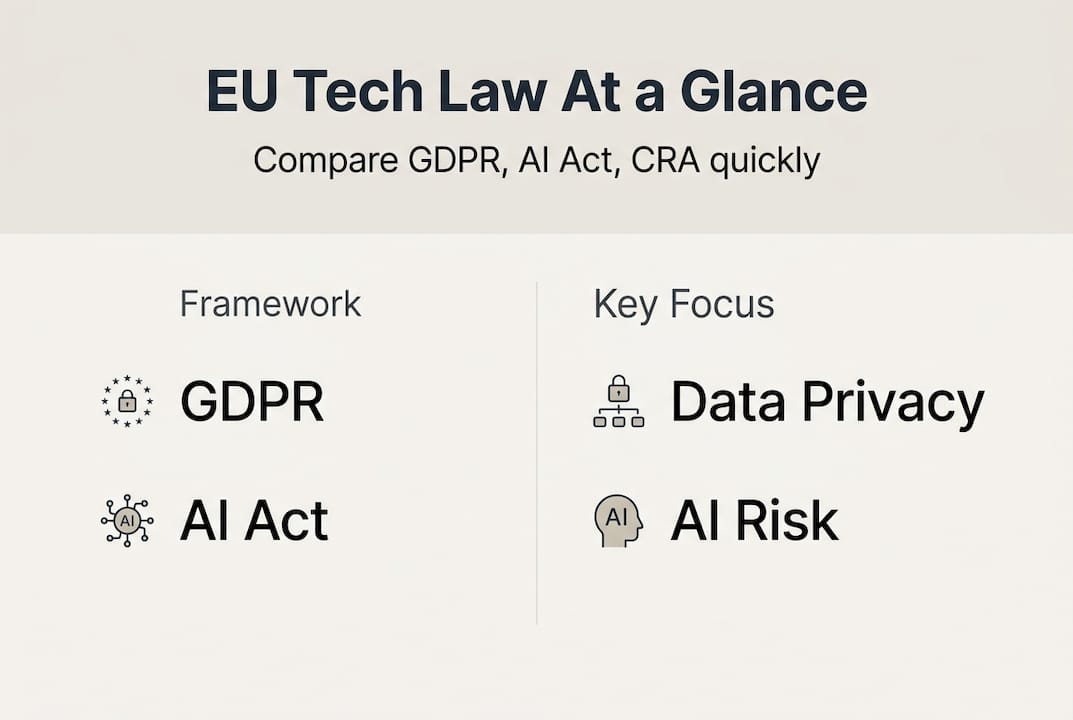

Core EU technology law frameworks: GDPR, AI Act, and more

The European Union has built one of the most extensive technology law frameworks in the world. For businesses operating in or selling into the EU market, these regulations carry direct legal obligations, regardless of where the company is headquartered.

The EU technology law framework includes six primary instruments that leaders must understand:

| Regulation | Focus area | Who it applies to | Key obligation | Maximum penalty |
|---|---|---|---|---|
| GDPR | Personal data | Controllers and processors handling personal data of individuals in the EU | Lawful basis, data subject rights | €20 million or 4% of global turnover, whichever is higher |
| AI Act | Artificial intelligence | Providers and deployers of AI systems | Risk classification, conformity assessment | Up to €35 million or 7% of global turnover |
| Data Act | Non-personal data | Connected device manufacturers, data holders | Data sharing obligations | Member state defined |
| DSA/DMA | Digital platforms | Online platforms and gatekeepers | Transparency, interoperability | Up to 6% of global turnover (DSA); up to 10% (DMA) |
| CRA | Cyber resilience | Manufacturers of digital products | Security by design, vulnerability reporting | €15 million or 2.5% of global turnover |
| NIS2 | Network and information security | Essential and important entities | Incident reporting, risk management | €10 million or 2% of global turnover (essential entities) |
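To make the penalty column concrete, the short sketch below works through the arithmetic for the three regimes in the table that state both a fixed amount and a turnover percentage. It assumes the ceiling is whichever of the two figures is higher, which is how these caps operate for undertakings. The names `FineCap`, `CAPS`, and `max_exposure` are illustrative only, and the result is a theoretical upper bound, not a prediction of any actual fine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineCap:
    fixed_eur: float     # fixed ceiling in euros
    turnover_pct: float  # ceiling as a percentage of worldwide annual turnover


# Headline ceilings taken from the table above (illustrative subset).
CAPS = {
    "GDPR": FineCap(fixed_eur=20_000_000, turnover_pct=4),
    "CRA": FineCap(fixed_eur=15_000_000, turnover_pct=2.5),
    "NIS2": FineCap(fixed_eur=10_000_000, turnover_pct=2),
}


def max_exposure(regulation: str, global_turnover_eur: float) -> float:
    """Return the theoretical maximum fine: the higher of the two ceilings."""
    cap = CAPS[regulation]
    turnover_based = global_turnover_eur * cap.turnover_pct / 100
    return max(cap.fixed_eur, turnover_based)


# A company with EUR 1 billion turnover: 4% (EUR 40 million) exceeds EUR 20 million.
large_company_gdpr = max_exposure("GDPR", 1_000_000_000)

# A company with EUR 100 million turnover: the fixed EUR 20 million ceiling applies.
smaller_company_gdpr = max_exposure("GDPR", 100_000_000)
```

The point for leadership is that the fixed amount dominates for smaller firms, so the percentage figure alone understates their exposure.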

The AI Act deserves particular attention. It introduces a risk-based approach with four tiers: unacceptable risk (prohibited), high risk (strict obligations), limited risk (transparency duties), and minimal risk (largely unregulated). Lifecycle compliance is required, meaning obligations apply from design through deployment and ongoing monitoring. Non-EU providers of high-risk AI systems and general-purpose AI models must also appoint an authorised representative established in the EU.

For leaders, the core GDPR obligations remain ensuring that any processing of personal data has a lawful basis, that data subjects can exercise their rights, and that notifiable breaches are reported to the supervisory authority within 72 hours of the organisation becoming aware of them. These are not IT-level tasks. They require governance structures, documented policies, and board-level accountability.
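The 72-hour breach window is easy to state and easy to miss in practice. The sketch below shows one way an incident response tool might track it, assuming the clock starts when the organisation becomes aware of the breach. The function names are hypothetical, and whether a given incident is notifiable at all remains a legal judgment that code cannot make.

```python
from datetime import datetime, timedelta, timezone

# GDPR breach notification window, counted from the moment of awareness.
NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(became_aware_at: datetime) -> datetime:
    """Return the latest time to notify the supervisory authority."""
    if became_aware_at.tzinfo is None:
        # Naive timestamps are ambiguous across offices and time zones.
        raise ValueError("use a timezone-aware timestamp")
    return became_aware_at + NOTIFICATION_WINDOW


def hours_remaining(became_aware_at: datetime, now: datetime) -> float:
    """Return hours left before the deadline (negative once it has passed)."""
    remaining = notification_deadline(became_aware_at) - now
    return remaining.total_seconds() / 3600


aware = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
deadline = notification_deadline(aware)  # 2026-03-05 09:00 UTC
```

Recording the awareness timestamp in UTC at the moment of escalation is the design choice that matters here, since the deadline runs through weekends and public holidays.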

The practical steps for building a compliant foundation include:

- Map all personal data flows across the business

- Classify AI systems by risk tier under the AI Act

- Review supplier contracts for data processing clauses

- Establish an incident response protocol for cyber events

- Appoint a Data Protection Officer where required

- Review evidence on EU AI regulation to stay current on implementation timelines

Pro Tip: Start with an inventory of every AI tool and data-processing system used across the business. This single exercise surfaces the majority of compliance gaps and is the foundation for any credible regulatory mapping.
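As a rough illustration of what such an inventory could look like, the sketch below records each system with an owner, flags for personal data and AI use, and an AI Act risk tier, then lists the open gaps. The field names, the `open_gaps` helper, and the three checks are assumptions made for this example; a real register would need far more detail and legal review.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    """The four AI Act risk tiers described above."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class SystemRecord:
    name: str
    owner: str
    processes_personal_data: bool
    uses_ai: bool
    risk_tier: Optional[RiskTier] = None   # None means not yet classified
    lawful_basis_documented: bool = False  # only relevant for personal data


def open_gaps(inventory: list[SystemRecord]) -> list[str]:
    """Return a plain-language list of gaps found in the inventory."""
    gaps = []
    for record in inventory:
        if record.uses_ai and record.risk_tier is None:
            gaps.append(f"{record.name}: AI Act risk tier not yet assigned")
        if record.risk_tier is RiskTier.UNACCEPTABLE:
            gaps.append(f"{record.name}: falls in the prohibited tier, escalate")
        if record.processes_personal_data and not record.lawful_basis_documented:
            gaps.append(f"{record.name}: no documented lawful basis for personal data")
    return gaps


inventory = [
    SystemRecord("CV screening model", "HR", True, True, RiskTier.HIGH, True),
    SystemRecord("Support chatbot", "Customer care", True, True, None, True),
    SystemRecord("Payroll database", "Finance", True, False),
]
gaps = open_gaps(inventory)
```

Even a spreadsheet with these columns serves the same purpose. What matters is that every system has a named owner and an explicit classification status.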

For companies still developing their approach, legal compliance is not a constraint on growth or ambition. It is the infrastructure that makes sustainable scaling possible. Explore more tech law insights to build a fuller picture of obligations relevant to your sector.

Practical challenges: Overlaps, ambiguities, and SME roadblocks

Understanding individual regulations is one thing. Navigating their interaction is considerably harder. Regulatory overlaps between GDPR, the AI Act, and the Cyber Resilience Act create compounded obligations that require simultaneous compliance across multiple legal regimes.

Consider a company deploying an AI-driven HR recruitment tool. It must classify the system as high-risk under the AI Act, ensure GDPR compliance for candidate data processing, meet CRA security standards if the tool is embedded in a software product, and potentially comply with NIS2 if the organisation qualifies as an important entity. Each regulation has its own documentation requirements, audit trails, and reporting timelines.

| Scenario | GDPR obligation | AI Act obligation | CRA obligation |
|---|---|---|---|
| AI recruitment tool | Lawful basis, data minimisation | High-risk conformity assessment | Security by design |
| Connected IoT device | Data processing agreements | Risk classification | Vulnerability disclosure |
| Automated credit scoring | Automated decision-making rules | High-risk registration | Incident reporting |

Ambiguities compound the challenge. Court outcomes on AI training data and copyright differ from court to court, with the Munich and Hamburg courts reaching divergent conclusions on text and data mining opt-outs. Risk tier classification for novel AI applications remains contested, leaving businesses in legal uncertainty.

"Forty-five European organisations formally called for simplification of the cumulative regulatory burden, citing the disproportionate impact on smaller firms."

For SMEs and scaling startups, the barriers are particularly acute. Legal uncertainty raises the cost of market entry. Compliance resourcing strains limited teams. And the pace of regulatory change means that a policy written in 2024 may already require revision by 2026.

Practical steps for navigating intersecting obligations include:

- Appoint a cross-functional compliance lead who bridges legal, technical, and product teams

- Use the compliance checklist for SMEs as a structured starting point

- Map obligations by system and data type, not by regulation in isolation

- Engage with national regulatory sandboxes to test compliance approaches before full deployment

- Review the startup compliance essentials relevant to your jurisdiction and sector

- Monitor EU digital compliance essentials for sector-specific updates
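Mapping obligations by system rather than by regulation can be sketched as a simple lookup. The example below mirrors the recruitment tool scenario described earlier: each system is described by a few yes/no characteristics, and each characteristic points to a regime that may apply. The triggers are deliberately simplified assumptions for illustration and are not the legal tests for scope, which need case-by-case assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    name: str
    personal_data: bool                   # processes personal data
    ai_system: bool                       # includes an AI component
    product_with_digital_elements: bool   # shipped as or inside a digital product
    essential_or_important_entity: bool   # operator may fall under NIS2


def applicable_regimes(profile: SystemProfile) -> list[str]:
    """Return the regimes that may apply, using simplified yes/no triggers."""
    regimes = []
    if profile.personal_data:
        regimes.append("GDPR")
    if profile.ai_system:
        regimes.append("AI Act")
    if profile.product_with_digital_elements:
        regimes.append("CRA")
    if profile.essential_or_important_entity:
        regimes.append("NIS2")
    return regimes


def regimes_by_system(profiles: list[SystemProfile]) -> dict[str, list[str]]:
    """Build one view per system instead of one checklist per regulation."""
    return {profile.name: applicable_regimes(profile) for profile in profiles}


recruitment_tool = SystemProfile("AI recruitment tool", True, True, True, True)
overview = regimes_by_system([recruitment_tool])
```

The per-system view makes overlaps visible at a glance, so a single owner can plan documentation and reporting once for a system that several regimes touch.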

Global comparisons and critical perspectives: Is the EU model stifling or safeguarding?

The EU's approach to technology law is fundamentally precautionary. It prioritises rights protection, risk mitigation, and regulatory certainty. The United States, by contrast, has historically favoured a lighter-touch model, relying on sector-specific rules and market correction rather than horizontal regulation. Asian jurisdictions vary considerably, with China pursuing aggressive state-led digital governance and other markets adopting hybrid approaches.

Critics of the EU model raise legitimate concerns:

- Heavy compliance costs disadvantage European startups competing with less-regulated US or Asian counterparts

- Regulatory fragmentation across member states creates inconsistency despite harmonisation goals

- Overly broad definitions create legal uncertainty, particularly for novel AI applications

- The pace of regulation may outstrip the capacity of regulators to enforce it coherently

Supporters of the EU approach make an equally credible case:

- Strong data protection rules build consumer trust, which translates into commercial advantage

- Legal certainty over the long term reduces litigation risk and supports institutional investment

- Market harmonisation across 27 member states creates a unified regulatory environment that simplifies cross-border scaling

- Research on digital law perspectives suggests no proven causal link between regulatory depth and reduced innovation output

The false choice between regulation and innovation is a framing that serves neither side well. European businesses operating under GDPR have built globally competitive products. The compliance infrastructure required by EU law can, when implemented strategically, become a differentiator rather than a burden.

Pro Tip: Rather than treating EU compliance as a cost centre, position it as a trust signal. Enterprise clients, institutional investors, and public sector partners increasingly treat demonstrable compliance as a procurement requirement. For companies pursuing cross-border compliance, this reframing has direct commercial value. The legal guide for cross-border business provides a practical starting point for firms entering new European markets.

A realistic take: What leaders actually need to do next

The most persistent mistake business leaders make is treating technology law as a delegable technical matter. It is not. Board-level accountability for data governance, AI risk, and cybersecurity resilience is now embedded in multiple EU regulations. Delegating it entirely to legal or IT teams creates governance gaps that regulators and courts will not overlook.

The hard-won lesson from organisations that navigate this well is straightforward: start with an AI and data inventory, prioritise risk mapping across the full system lifecycle, and embed regulatory updates into board-level decision-making cycles. Regulatory sandboxes offered by national authorities provide a structured environment to test compliance approaches without full market exposure. Cross-functional compliance roles, bridging product, legal, and technical teams, are more effective than siloed legal review.

Do not chase perfect compliance. No organisation achieves it. Instead, build systems for tracking regulatory change, documenting decisions, and demonstrating good-faith effort. Regulators consistently treat documented, proactive compliance programmes more favourably than reactive responses to enforcement. The board-level GDPR strategies that work are those built into governance structures, not bolted on as an afterthought.

How expert legal guidance secures your tech business

Technology law's complexity does not diminish as a business scales. It compounds. Each new market, product line, or data-processing activity introduces fresh obligations that intersect with existing frameworks in ways that are rarely straightforward.

Expert legal advisory support helps technology-driven businesses map their obligations accurately, prioritise risk, and build compliance programmes that hold up under regulatory scrutiny. At Vucic.legal, the focus is on practical, strategic guidance that aligns legal compliance with business objectives. Whether you are navigating corporate law for leaders, structuring cross-border operations, or building an AI governance framework, strategic legal services tailored to technology businesses provide the clarity and confidence that leadership teams need to move forward decisively.

Frequently asked questions

What is technology law in simple terms?

Technology law is the body of law that regulates computing, software, data, and the internet, covering how these are used, protected, and governed across business and society.

Do EU technology laws only affect large companies?

No. EU tech law applies to all technology-driven businesses, including SMEs and startups, with cumulative obligations that can be proportionally more burdensome for smaller firms.

What is the difference between the AI Act and GDPR?

The AI Act governs the development and deployment of artificial intelligence through a risk-based classification system, while GDPR sets the rules for collecting, processing, and protecting personal data.

What are the main compliance risks under EU technology law?

Firms face fines reaching 7% of global turnover for AI Act breaches, alongside significant GDPR and CRA penalties, with overlapping obligations raising the overall complexity and risk exposure.

How can business leaders stay on top of tech law changes?

Leaders should prioritise AI inventories, conduct regular staff training, and schedule structured legal reviews with professional advisers who track regulatory timelines and enforcement trends.